An Inventory of the Unseen Consequences of AI in Libraries

By: Meghan Voll

I’m an avid user of the library reading app Libby. In fact, I credit it with reviving my interest in supporting my local library, and it is the reason my decades-old library card is no longer gathering dust in my wallet (at least, digitally). However, recent changes to the app’s corporate position and its implementation of new design features have prompted harsh criticism from its users. My question is: why? Having followed this thread for a while, I’d like to speculate and create an inventory of the potential harms of implementing artificial intelligence in libraries’ online collections. The following does not necessarily fall within my purview, as I am not a researcher of libraries; these are my thoughts as a user and as a data harms researcher.

For the uninitiated, Libby is a free mobile reading application developed by OverDrive. The app allows users to borrow digital books, audiobooks, and magazines from their local libraries (provided the library is signed up with the app). Using their library card, users can borrow and place holds on titles they’d like to read or listen to. The app even syncs across devices, including Kindle. For me, the app is convenient and lets me read most books I’m interested in without having to go to the physical space or purchase a title I may not end up liking.

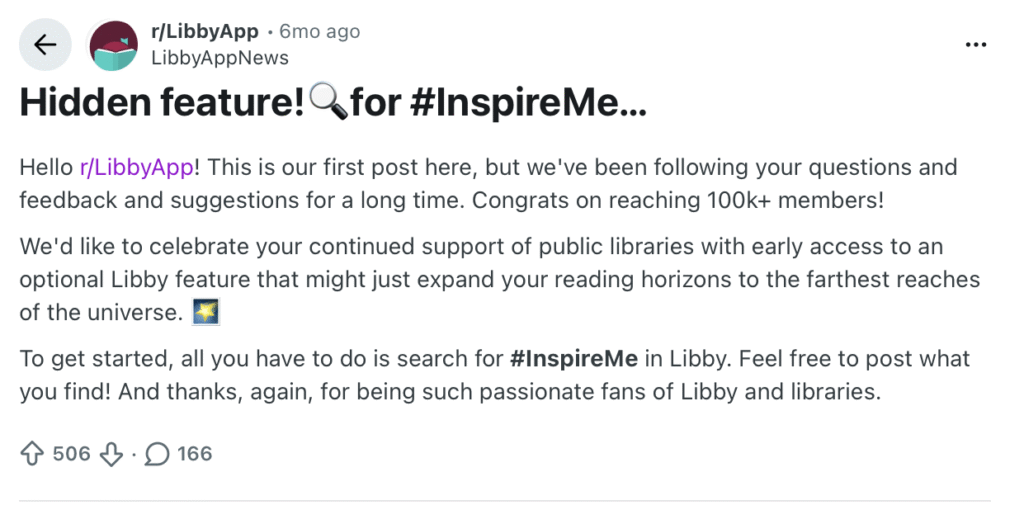

Six months ago, the Libby corporate account posted the following on the app’s official subreddit, r/LibbyApp. The post encouraged users to type in the hashtag #inspireme to access a new feature that promised to “expand their reading horizons”. The comments were less than enthusiastic. Users warned others that the promised “expansion” was a generic AI recommendation feature that suggests titles a reader might like based on their reading history. Many users also expressed frustration that trying the feature changed the format of the app with no way to change it back (it is unclear whether this has since been fixed).

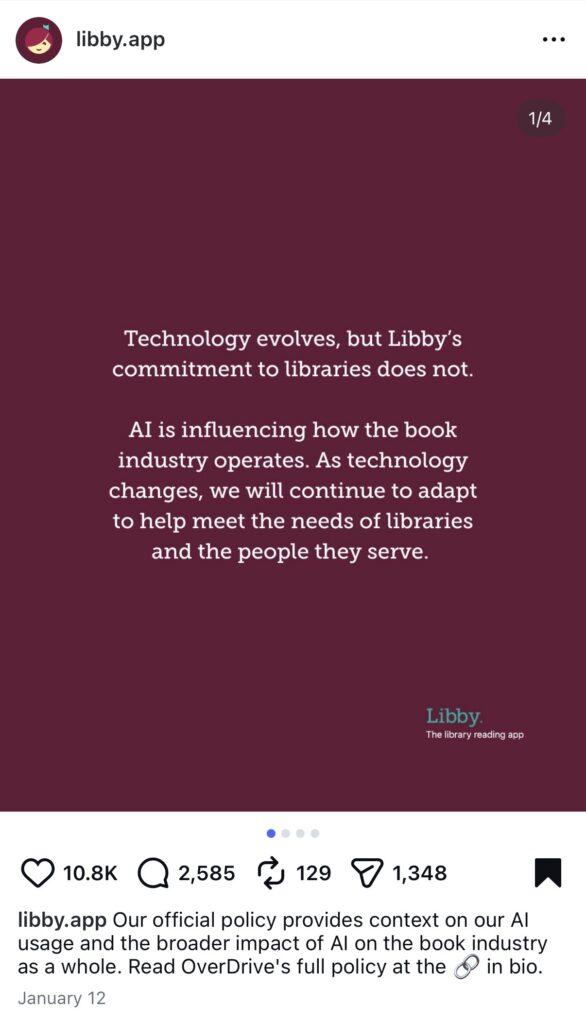

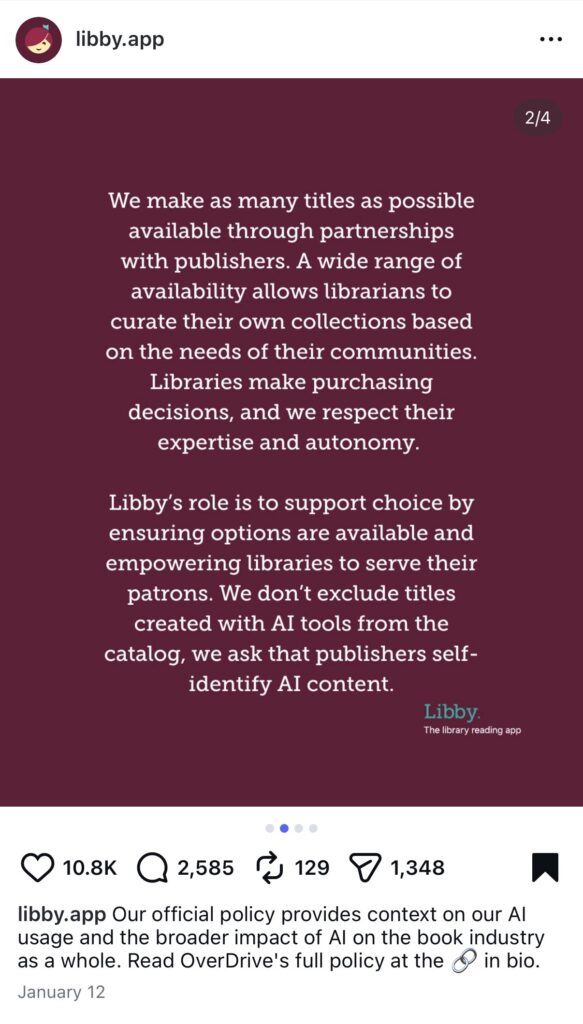

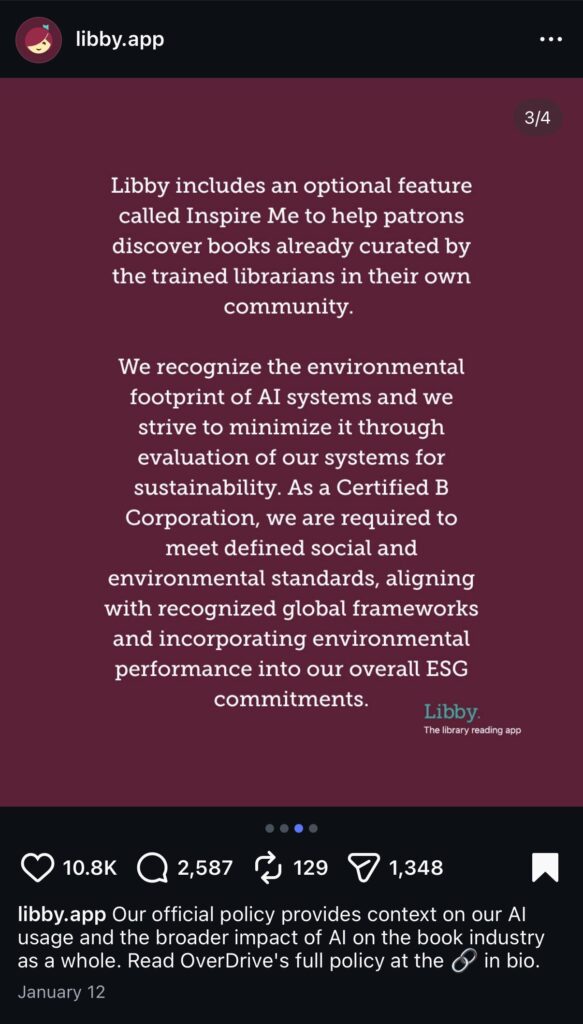

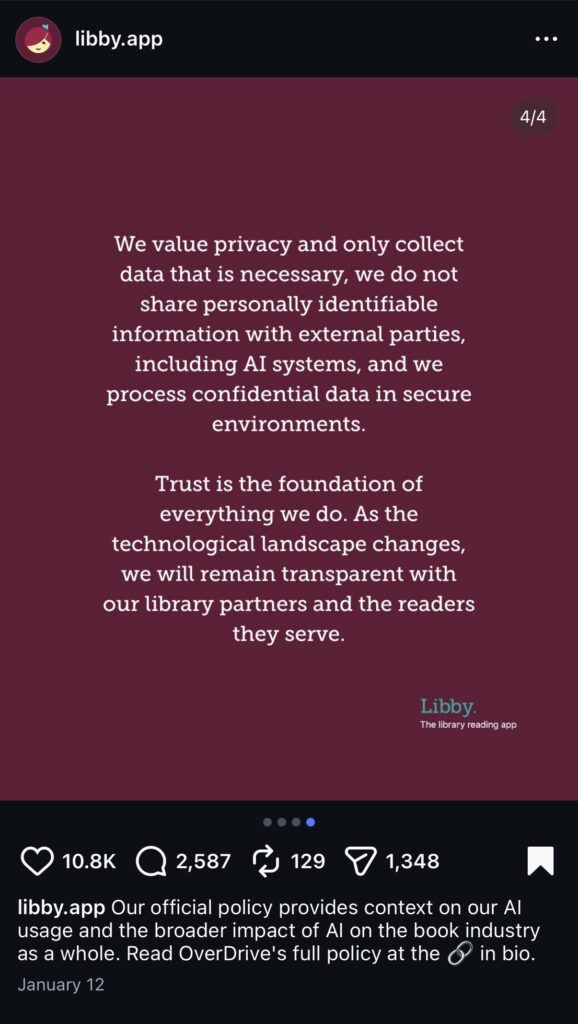

Three months ago, Libby announced on its official Instagram account that it was committed to implementing new artificial intelligence features as they became available. Libby also said it would allow AI-assisted texts on its platform, provided authors clearly disclosed when AI was used. This announcement was also poorly received. Users called Libby’s decision a “bold” move and argued that self-disclosure of AI-assisted texts is not an effective means of regulation. Many users also worried about what the app’s next moves might be as more AI features become available.

Curious, I reached out to a colleague in Library Sciences. I asked why the Libby situation might be an issue, and why it might anger so many people. Most of all, I wanted to know what effect Libby’s decisions would have on libraries. I could see why the decision might be an issue for librarians, but why for users? Interestingly, my colleague suggested that an AI feature such as #inspireme isn’t much different from a recommendation algorithm. That, they said, isn’t harmful in itself; lots of apps do it. However, they did feel that it would hinder readers’ autonomy to find books for themselves. My colleague also felt that lesser-known books in certain genres, such as fantasy or young-adult fiction, would be buried under the weight of more popular titles (e.g. The Hunger Games, Divergent, etc.).

Now, is this a harm? In their paper, Nathalie DiBerardino and our very own Dr. Luke Stark argue that AI ethics needs to distinguish between “harms” (as distinctly negative outcomes) and “wrongs” (as violations of rights or relational equality). As a data harms researcher, I agree that a distinction along these lines is needed. The outcomes my colleague described aren’t necessarily harms or wrongs, but hidden among them are the foundations of, or the potentiality for, both. It is this potentiality that does a disservice to libraries and those who use them.

In my research on data harms and aging, I find it important to talk about potentiality and its related vocabulary because doing so can reveal patterns that indicate when and where a harm may emerge. It is here that I return to the question of why Libby’s pro-AI stance has angered its user base, and to the inventory of harms that stance can cause. The disservices my colleague describes carry a potential for certain harms and, at the very least, for wrongdoing.

One set of harms is filter bubbles and echo chambers for the user. Have you ever searched for a product you’re interested in, only to have advertisements for it follow you into every online space you visit? The same could happen in the case of Libby. If the books recommended to users are based on their previous reading history, entire genres could be left out of what they see. The user’s autonomy and ability to navigate the library are diminished, and there is no way to tell the AI that its recommendations are a poor fit.

While I’m not a researcher in the field of library sciences, I have no doubt there are harms specific to librarians and the services they provide in curating collections for the public. Unfortunately, this blog post can only speculate on those. I leave it to others more informed than me to pick up on this part of the inventory.

There is also the question of data collection. Despite what Libby claims, I find it hard to believe that data collection will stop once it has powered a feature that recommends your next read. This point reveals another potential for harm.

There is also the question of whether we, as a society of readers, want the cultural landscape of the library to change. Do we want the curiosity these institutions inspire to shift? I believe this is a profound question to ask in the age of social media and phone addiction (more on this in a future blog post). The fact is that once we change the landscape by implementing AI features (good or bad), there is no going back. For example, the widespread adoption of social media brought with it many disservices and harms, such as the loss of social connection, which can lead to poor mental and physical health. Will the implementation of these features help or harm readers and the libraries they visit in the future? It is imperative that we start asking these questions more seriously as artificial intelligence permeates more and more of everyday life.

There is more potential for wrongdoing and harm than this blog post can list. Libby readers also cited concerns about AI’s impact on the environment, and about its misalignment with the values of libraries as public institutions that aim to benefit and support their communities. Indeed, such a misalignment fits the very visible corporate agenda that often comes with the implementation of these features. In my opinion, this is an important question to consider, but the potentiality for harm is an equally pressing concern. As we become more aware of the potential harms of AI, I believe frustration and anger are warranted. As features such as Libby’s #inspireme begin to infringe on the user experience, those using the app need to ask themselves: will the positive effects outweigh the negative? More importantly, is this a change we want to make, and will it lead to better outcomes?